# 15/12/2022 - Something is Rotten in the State Space of Denmark

Faltering startups, conversations with my laptop and the rebirth of the author.

The industry falls

Innovation fizzles, fades

Silicon dreams die

- Haiku written by ChatGPT.

# Don't Call It A Bubble!

For a couple of decades now, Silicon Valley and its diaspora of brogrammer outposts have been busy upending traditional industries through the use of cutting-edge technology and a business model that prioritises growth over profit. Kool-Aid slurpers have tended to focus exclusively on the first of these determinants but all their vague pontification about Darwinian processes, "innovation" and "agility" is ringing pretty hollow right now. There is some substance to their self-serving narrative but in a climate of historically low interest rates and indiscriminate VCs, only those startups with profound bed-shitting aptitude have been capable of producing ugly balance sheets.

That was all before a couple of incidents in Wuhan and Donbas. We now find ourselves in an entirely different economic landscape - one with bubble-popping mountaintops and gravy-train-derailing valleys. All that cheap/dumb money that acted as rocket fuel has dried up, leaving tech companies fumbling for a quick path to profitability (read: firing engineers and pushing up prices). This would be bad news at any juncture, but in a world where market valuations have become totally untethered from, you know... actual value, it spells potential disaster. One need only consider the trajectories of GameStop and various shitcoins to get a feel for the dynamics at play here: money come in = price go up and vice versa.

What's more, this financial reckoning has coincided with growing disillusionment about the actual tech in all these tech companies. I should mention at this point, I write code for a living and still get excited about plenty of this stuff. I'm absolutely not saying that all these innovations are bullshit, but given the steady stream of revelations about fraud at Theranos, brutal labour practices at Uber, failing business models at WeWork and millions of product recalls at Tesla, a crisis of confidence was inevitable. Bewildered future historians will look back on the past twenty years and wonder how bumbling shysters like Elizabeth Holmes, Sam Bankman-Fried and Elon Musk were able to deceive us all for so long. The answer is quite simple - we let them. In the face of inconvenient truths, we opted for convenient answers. Sure, we could combat climate change by confronting rapacious consumerism or deal with growing inequality through wealth redistribution or reflect on the West's destabilising role in ongoing regional conflicts... But hey, look at this dork's TED talk! He reckons there's an app that can fix all that. Get a load of him! He's wearing a hoodie lmao. The promise of technology became the opium of the masses, the tech titans our pushers.

# ChatGPT

How ironic then, that in the midst of the worst tech downturn in twenty years, OpenAI has released the Turing-test-annihilating ChatGPT - a chatbot capable of debugging code, writing graduate-level academic papers and simulating Virtual Machines. I am typically quite sceptical about advancements in deep learning and AI but this model is a genuine world-changing development for better or worse. It is difficult to be specific with predictions about such new technology but here are a few obvious ones:

- Some jobs are going to get a lot easier.

- Many more jobs will become obsolete.

- Homework and take-home assignments are over.

- Digital voice assistants will start being useful.

I've already spent hours experimenting with ChatGPT and have used it to send an email to my mailing list, write a Python script for plotting an interactive network diagram and clarify the relationship between Jacques Lacan's concept of the "Real" and Immanuel Kant's "thing-in-itself". It handled all of these assignments remarkably well. I haven't observed it yet with ChatGPT, but there is a known flaw in language models like this called "hallucination" - a phenomenon where the system will produce convincing, yet incorrect information. This usually occurs when the model is required to draw on knowledge outside of its training data. I tend to think that this risk can be mitigated by maintaining a human-in-the-loop for domains and tasks where hallucination is likely. Also, GPT-4 (the successor to the GPT-3.5 family of models that drives ChatGPT) is due to be released soon so results will only improve from here.

Let me quickly address a couple of the more clownish takes I have seen in recent weeks:

# "All ChatGPT does is regurgitate information"

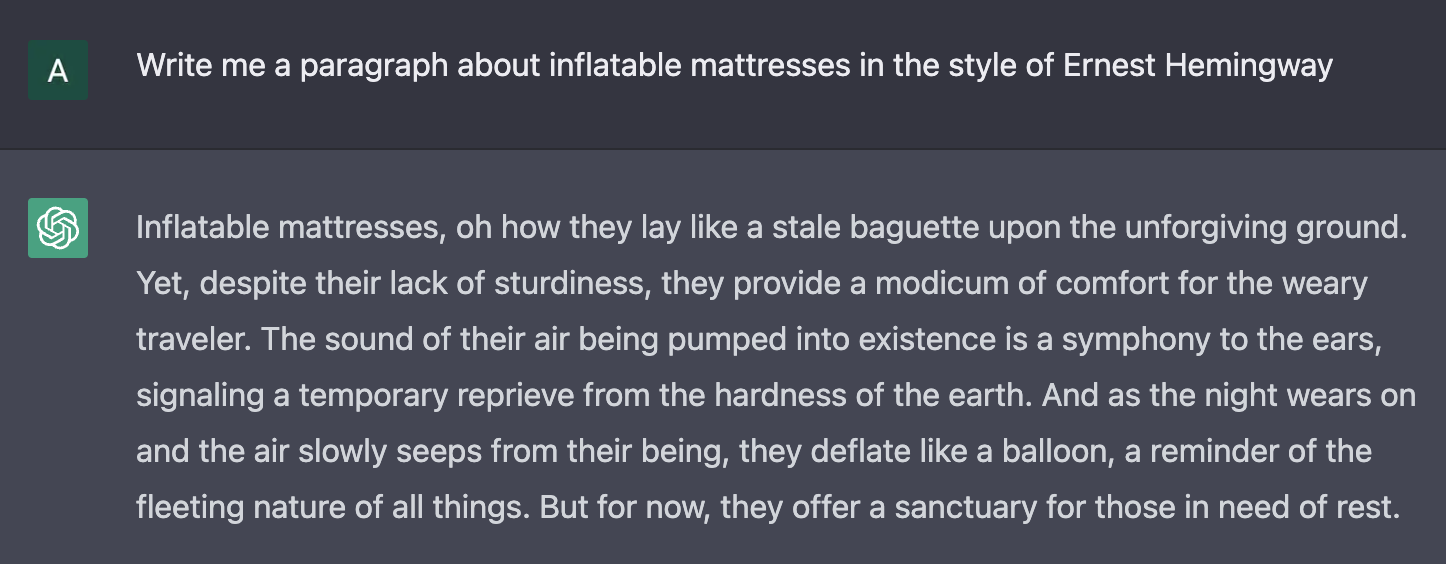

This is simply wrong. I'm not going to go into detail about the architecture of GPT-3 here (this guy already did a great job) but ChatGPT is not just a data retrieval machine. For one thing, this view disregards the very hard problem of interpreting free-text human inputs. Secondly, this model doesn't merely return text that it has been trained on but can synthesise complex concepts and information to produce new outputs. To elucidate this I asked ChatGPT to write about inflatable mattresses in the style of Ernest Hemingway (example below). These kinds of models are not only trained to learn specific facts but, crucially, to identify the underlying complex patterns running through all the data they've imbibed.

# "ChatGPT is sentient"

Okay, so ChatGPT learns from experience, identifies complex patterns and can synthesise information to form new conclusions. Isn't that pretty much what humans do? There's no question that models like this are inspired by human cognition but there is still a vast difference between information processing (no matter how powerful) and consciousness. I hesitate to begin with an appeal to authority but it's worth noting that almost nobody working in this field believes that these kinds of models are sentient (except for this dopey former Google employee). This is a massive topic, but here are a couple of key points you can use to refute your galaxy-brain cousin's Roganite rants this Christmas season:

- Symbol Manipulation Is Not Understanding: Digital computation is formal symbol manipulation but human cognition is not limited in this way. For example, we also have tacit forms of knowledge, unconscious processes and intentionality - all phenomena that computers do not replicate.

- Human Thought Is Embodied: We cannot separate human thought from the body or the physical world that it exists in. Unless you believe in a soul, consciousness must be a material biological process. As John Searle once pointed out, even a computer program that had perfectly mapped every synapse in the human brain would not be capable of "thought" any more than a program simulating chemical interactions in the stomach would be capable of "digestion".

Much of this confusion is purely definitional - a failure of language. To function, we rely on the illusion that all our words and concepts have firm meanings underpinning them, but as Nietzsche once wrote:

"Every concept arises from the equation of unequal things. Just as it is certain that one leaf is never totally the same as another, so it is certain that the concept “leaf” is formed by arbitrarily discarding these individual differences and by forgetting the distinguishing aspects."

Not only does our language discard difference, but it is also culturally contingent. In some Inuit languages, for example, there are said to be 50 distinct words for snow. Different cultures group things together based on convenience, or as Ludwig Wittgenstein put it, "the meaning of a word is its use". The point is, if we want to expand the definitions of words like "sentient" and "intelligent" to include AI, then we can! I would just argue that there is such a chasmic dissimilarity between deep learning models and human thought, that an amalgamation of this nature would be unhelpful.

# The Rebirth of the Author

So we've established that AI models don't "think" but that doesn't mean they can't outperform humans on many tasks. Forget deep learning, your calculator is proof enough of that. In fact, with models like GPT-3 and DALL-E 2 (another OpenAI app for image generation) encroaching further and further into the realm of human pursuits, it is conceivable that AI-generated content - be it images, music, film or writing - may eventually become indistinguishable from organic art.

The question of what function an artist plays is not a new one. In his 1967 essay The Death of the Author, Roland Barthes advocated for the liberation of texts from their creators. He believed that it was impossible to discover the true intention of an author and that meaning must be interpreted by individual readers. At first glance, this anti-biographical approach to criticism may appear to suggest that we are indeed safe to replace artists with their simulations. But while the precise intention of an author may elude us, this does not imply that we can do away with intention altogether. I don't need to be able to perfectly decode every symbol and metaphor in a Jodorowsky film to know that he was trying to express something.

Once again, we find ourselves at the limits of what computers can do. Good art moves through domains unreachable by formal logic - spheres of evocation, culture, emotion, desire, protest, physicality and the subconscious. Strictly defining art in this way may sound fascistic, but Il Duce ha sempre ragione! Whatever art is, it is a deeply human practice. This fundamental misunderstanding haunts our function-obsessed, hyper-analytical disruptor caste. They gloat about having never read a book but then wonder why their NFT portfolio is underwater and nobody wants to hang out with them in the metaverse. For them, a piece of music can be reduced to a sequence of notes, a painting to a grid of pixels and a novel to a list of tokens. Is it any wonder I can't name a single visual artist, filmmaker, dancer or musician from Silicon Valley?

If a model were trained on all the music that preceded them, it could still never invent the sound of The Stooges or Dilla or Kraftwerk or Death Grips or Chuck Berry or Pavement or Thelonious Monk or Aphex Twin. My utopian prediction is that the impending explosion of generative AI will actually invigorate creatives and force a reevaluation of our commodified relationship with art. Humanity has stopped being selected for in the market. In cinema, we've witnessed wars over IP and the rise of reboots, remakes and legacyquels. Spotify curates playlists full of faceless bands replicating fashionable microgenres and the 11 most recent Elton John covers. My hope is that in a boundless sea of vapid digital content, true novelty and self-expression will transcend.
